I'm so happy to finally be here, at the Networking part of the Public Cloud!!! I know, there are more important parts of Cloud than Networks, but SDN is my true love, and we should give it all the attention it deserves.

IMPORTANT: In this post I will be heavily focusing on Google Cloud Platform. The concepts described here apply to ANY Public Cloud. Yes, specifics may vary, and in my opinion GCP is a bit superior to AWS and Azure at this moment, but if you understand how this one works, you'll easily get all the others.

Virtual Private Cloud (VPC)

VPC (Virtual Private Cloud) provides global, scalable and flexible networking. This is an actual Software Defined Network provided by Google. A project can have up to 5 VPC networks by default. A VPC network is global, contains subnets and uses a private IP space, while the subnets themselves are regional. The network that you are provided with a VPC is:

- Private

- Secure

- Managed

- Scalable

- Can contain up to 7000 VMs

Once you create the VPC, you have a cross-region RFC 1918 IP space network, using Google's private network underneath. It uses the global internal DNS, load balancing, firewalls and routes, and you can scale rapidly with global L7 Load Balancers. Subnets within a VPC can only exist within a single region: you can't extend a Subnet over your entire VPC.
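To make the "RFC 1918 space, regional subnets" idea concrete, here is a minimal Python sketch that checks a subnet plan before you create anything. The plan format (subnet name mapped to a region and a CIDR range) and the example names are hypothetical, and the script only uses the standard `ipaddress` module; it does not talk to GCP. It checks that every range sits inside one of the three RFC 1918 blocks and that no two subnets overlap, while each subnet carries exactly one region, mirroring the rule that a subnet cannot stretch across regions.

```python
import ipaddress

# The three private blocks defined by RFC 1918.
RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def validate_subnet_plan(plan):
    """Check a hypothetical subnet plan.

    plan: dict mapping subnet name -> (region, IPv4 CIDR string).
    Returns a list of problem descriptions; an empty list means no issues found.
    """
    problems = []
    networks = {}
    for name, (region, cidr) in plan.items():
        net = ipaddress.ip_network(cidr)
        if not any(net.subnet_of(block) for block in RFC1918_BLOCKS):
            problems.append(f"{name} ({region}): {cidr} is outside RFC 1918 space")
        networks[name] = net

    # Compare every pair once: overlapping ranges cannot coexist in one VPC.
    names = sorted(networks)
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if networks[first].overlaps(networks[second]):
                problems.append(f"{first} and {second} overlap")
    return problems


plan = {
    "web-europe": ("europe-west1", "10.10.0.0/24"),
    "web-us": ("us-central1", "10.20.0.0/24"),
}
print(validate_subnet_plan(plan))  # []
```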

VPC Networks can be provisioned in:

- Auto mode, where one Subnet is created automatically in every region. Firewall rules and routes are preconfigured.

- Custom mode, where we have to manually configure the subnets.

IP Routing and Firewalling

Routes are defined for the network to which they apply. You can also limit a route to the Instances that carry a certain "instance tag" (network tag); if you don't specify the TAG, the route applies to all the instances in the network.
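The tag behaviour can be sketched in a few lines of Python. This is a simplified model, not GCP's implementation: a route without tags applies to every instance, a tagged route applies only to instances carrying at least one of its tags, and among the applicable routes the most specific destination wins, with the lower priority number breaking ties (roughly how GCP documents route selection). The route names and the `proxy-vm` next hop are hypothetical; `default-internet-gateway` is the name GCP uses for the default Internet next hop.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    dest_range: str                  # e.g. "0.0.0.0/0"
    next_hop: str
    priority: int = 1000             # lower number = preferred
    tags: frozenset = frozenset()    # empty = applies to all instances


def select_route(routes, instance_tags, dest_ip):
    """Return the route an instance with `instance_tags` would use for `dest_ip`."""
    ip = ipaddress.ip_address(dest_ip)
    candidates = [
        r for r in routes
        if (not r.tags or r.tags & instance_tags)      # tag filter
        and ip in ipaddress.ip_network(r.dest_range)   # destination match
    ]
    if not candidates:
        return None
    # Longest prefix first, then the lowest priority value.
    return min(
        candidates,
        key=lambda r: (-ipaddress.ip_network(r.dest_range).prefixlen, r.priority),
    )


routes = [
    Route("default-internet", "0.0.0.0/0", "default-internet-gateway"),
    Route("via-proxy", "0.0.0.0/0", "proxy-vm", priority=900,
          tags=frozenset({"use-proxy"})),
]
print(select_route(routes, {"use-proxy"}, "8.8.8.8").name)  # via-proxy
print(select_route(routes, set(), "8.8.8.8").name)          # default-internet
```

Tagging only some instances with `use-proxy` sends just those through the proxy VM, while every other instance keeps the untagged default route.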

When you route traffic to and from the Internet, you have 2 options:

- Many-to-one NAT, where multiple hosts are mapped to one public IP (known as SNAT in Cloud solutions such as OpenStack; check out my OpenStack post from some time ago for details about OpenStack Networking).

- Transparent proxies, which direct all external traffic to one machine (Floating IPs in OpenStack terminology).
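Many-to-one NAT is essentially port address translation: each private (address, port) pair leaving the network gets its own port on the single shared public IP, and replies are mapped back through that table. The toy Python class below only illustrates that idea; the addresses are documentation examples, and the sequential port allocation is a simplifying assumption, not how any GCP or OpenStack component actually allocates ports.

```python
class ManyToOneNat:
    """Toy source NAT: many private hosts share one public IP address."""

    def __init__(self, public_ip, first_port=1024, last_port=65535):
        self.public_ip = public_ip
        self._free_ports = iter(range(first_port, last_port + 1))
        self._outbound = {}  # (private_ip, private_port) -> public_port
        self._inbound = {}   # public_port -> (private_ip, private_port)

    def translate_outbound(self, private_ip, private_port):
        key = (private_ip, private_port)
        if key not in self._outbound:
            port = next(self._free_ports, None)
            if port is None:
                raise RuntimeError("NAT port pool exhausted")
            self._outbound[key] = port
            self._inbound[port] = key
        return self.public_ip, self._outbound[key]

    def translate_inbound(self, public_port):
        # Only replies to existing mappings get through; anything else is None.
        return self._inbound.get(public_port)


nat = ManyToOneNat("203.0.113.10")
print(nat.translate_outbound("10.0.0.2", 40000))  # ('203.0.113.10', 1024)
print(nat.translate_outbound("10.0.0.3", 40000))  # ('203.0.113.10', 1025)
print(nat.translate_inbound(1025))                # ('10.0.0.3', 40000)
```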

A project can contain several VPCs (Google allows you to create up to 5 VPCs per project). VPCs also support Multi Tenancy. Every VM instance in GCP belongs to some VPC. Routing and Forwarding must be configured to allow traffic within the VPC and with the outside world, and you also need to configure the Firewall Rules.

VPCs are GLOBAL, meaning the Resources can span anywhere around the world. Even so, instances from different regions CANNOT BE IN THE SAME SUBNET. An instance needs to be in the same region as a reserved static IP address. The zone in the region doesn't matter.
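Both constraints in this paragraph come down to comparing regions, and a zone's region can be read from its name, because GCP zone names are the region name plus a `-<letter>` suffix (for example, `europe-west1-b` is in `europe-west1`). A small Python sketch, with hypothetical helper names:

```python
def region_of(zone):
    """Derive the region from a zone name, e.g. "europe-west1-b" -> "europe-west1"."""
    region, sep, suffix = zone.rpartition("-")
    if not sep or len(suffix) != 1 or not suffix.isalpha():
        raise ValueError(f"not a zone name: {zone!r}")
    return region


def can_use_static_ip(instance_zone, address_region):
    # A reserved regional static IP only works for instances in the same region;
    # which zone inside that region does not matter.
    return region_of(instance_zone) == address_region


def can_join_subnet(instance_zone, subnet_region):
    # Subnets are regional, so an instance can only join a subnet in its own region.
    return region_of(instance_zone) == subnet_region


print(can_use_static_ip("europe-west1-b", "europe-west1"))  # True
print(can_use_static_ip("europe-west1-c", "europe-west1"))  # True (other zone, same region)
print(can_join_subnet("us-central1-a", "europe-west1"))     # False
```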

Firewall Rules match on the Source IP for ingress traffic and on the Destination IP for egress traffic. There are DEFAULT "allow egress" and "deny ingress" rules, pre-configured for you with the lowest possible priority (65535). This means that if you configure new FW rules with a lower number (higher priority), these will be applied instead of the default ones. GCP Firewall rules are STATEFUL. You can also use TAGs and Service Accounts (for example, something@my-project.iam.gserviceaccount.com) to configure the Firewall rules, and this is probably THE BIGGEST advantage of the Cloud Firewall, because you can do Micro Segmentation in a native way.

Firewall Rules are attached to instances through TAGs: once a rule targets a TAG, the next time you create an instance that needs that rule, you don't create the rule again, you just attach the TAG to your instance.
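The priority logic can be modelled in a short Python sketch. It is a simplification under stated assumptions: it ignores protocols, ports and statefulness, treats the two implied rules as ordinary entries at priority 65535, lets a rule target network tags or service accounts (empty means "all instances"), and, when two matching rules share a priority, lets deny win over allow, which is how GCP documents ties. Rule names, tags and the service account are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class FirewallRule:
    name: str
    direction: str                  # "INGRESS" or "EGRESS"
    action: str                     # "allow" or "deny"
    priority: int                   # 0..65535, lower number = higher priority
    ranges: tuple = ("0.0.0.0/0",)  # source ranges (ingress) or destination ranges (egress)
    target_tags: frozenset = frozenset()              # empty = all instances
    target_service_accounts: frozenset = frozenset()  # empty = all instances


# The two implied rules, at the lowest possible priority.
IMPLIED_RULES = (
    FirewallRule("implied-allow-egress", "EGRESS", "allow", 65535),
    FirewallRule("implied-deny-ingress", "INGRESS", "deny", 65535),
)


def rule_matches(rule, direction, remote_ip, tags, service_account):
    if rule.direction != direction:
        return False
    if rule.target_tags and not (rule.target_tags & tags):
        return False
    if rule.target_service_accounts and service_account not in rule.target_service_accounts:
        return False
    ip = ipaddress.ip_address(remote_ip)
    return any(ip in ipaddress.ip_network(cidr) for cidr in rule.ranges)


def evaluate(rules, direction, remote_ip, tags=frozenset(), service_account=None):
    """Return (action, rule name) for one connection attempt."""
    matching = [
        r for r in (*rules, *IMPLIED_RULES)
        if rule_matches(r, direction, remote_ip, tags, service_account)
    ]
    # Lowest priority number wins; on a tie, deny (False sorts first) beats allow.
    winner = min(matching, key=lambda r: (r.priority, r.action != "deny"))
    return winner.action, winner.name


rules = [
    FirewallRule("allow-web-from-anywhere", "INGRESS", "allow", 1000,
                 target_tags=frozenset({"web"})),
    FirewallRule("allow-internal-to-db", "INGRESS", "allow", 1000,
                 ranges=("10.0.0.0/8",),
                 target_service_accounts=frozenset({"db-sa@my-project.iam.gserviceaccount.com"})),
]
print(evaluate(rules, "INGRESS", "203.0.113.5", tags=frozenset({"web"})))
# ('allow', 'allow-web-from-anywhere')
print(evaluate(rules, "INGRESS", "203.0.113.5"))
# ('deny', 'implied-deny-ingress')
print(evaluate(rules, "EGRESS", "8.8.8.8"))
# ('allow', 'implied-allow-egress')
```

The service-account rule shows the micro-segmentation idea: the database instances accept internal traffic because of *who they run as*, not because of their IP addresses.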

There are 2 types of IP addresses in VPC:

- External, in the Public IP space

- Internal, in the Private IP space

VPCs can communicate with each other using the Public IP space (External addresses visible on the Internet). An External IP can be ephemeral (released when the instance is stopped or deleted, so it can change) or static. VMs don't know what their External IP is: the guest operating system only sees the Internal IP. IMPORTANT: If you RESERVE an External IP in order to configure it as STATIC, and don't use it for an Instance or a LB, you will be charged for it! Once you assign it, it's free (at the time of writing).

When you work with Containers, the containers should focus on the Application or Service. They don't need to do their own routing, which simplifies traffic management.

Can I use a single RFC 1918 space across several GCP Projects?

Yes, using a Shared VPC: Networks can be shared across Regions, Projects etc. If you have different Departments that need to work on the same Network resources, you'd create a separate project for each of them, give each Department access only to the project it works on, and use a single Shared VPC for the Network resources they all need to access. The Shared VPC lives in a host project, and the Departments' projects are attached to it as service projects.

Google Infrastructure

Google's network infrastructure has three distinct elements:

- Core data centers (central circle), used for Computation and Backend storage.

- Edge Points of Presence (PoPs), where Google connects its network to the rest of the internet via peering. Google is present on over 90 internet exchanges and at over 100 interconnection facilities around the world.

- Edge caching and services nodes (Google Global Cache, or GGC). These edge nodes represent the tier of Google's infrastructure closest to the users: network operators and internet service providers deploy Google-supplied servers inside their own networks.

CDN (Content Delivery Network) is also worth mentioning. It's enabled by Edge Cache Sites (Edge PoPs, or the light green circle above), the places where online content can be delivered closer to the users for faster response times. It works with Load Balancing, and the content is CACHED in 80+ Edge Cache Sites around the globe. Unlike most CDNs, your site gets a single IP address that works everywhere, combining global performance with easy management: no regional DNS required. For more information check out the official Google docs.

Connecting your environment to GCP (Cloud Interconnect)

While this may change in the future, a VPN hosted on GCP (Cloud VPN) does not accept client (remote-access) connections; it only works site-to-site. However, connecting a VPC to an on-premises VPN gateway (not hosted on GCP) is not an issue.

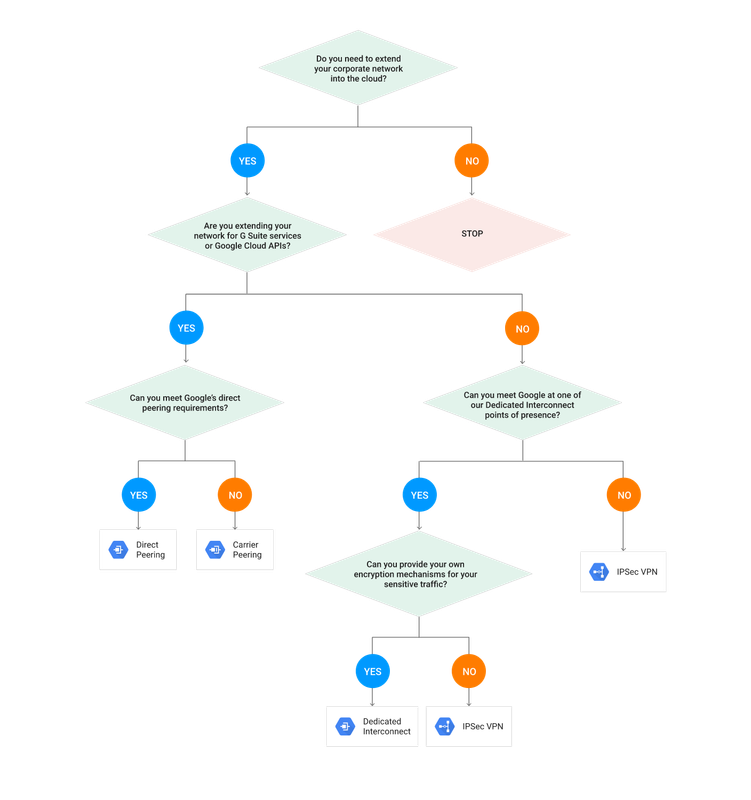

There are 3 ways you can connect your Data Center to GCP:

- Cloud VPN/IPsec VPN, as in a standard Site-to-Site IPsec VPN session (supports IKEv1 and IKEv2). Each tunnel supports roughly 1.5-3 Gbps, but you can set up multiple tunnels to increase throughput. You can also use this option to connect different VPCs to each other, or your VPC to another Public Cloud. Cloud Router is not required for Cloud VPN, but it does make things a lot easier by introducing Dynamic Routing (BGP) between your DC and GCP. When using static routes, any new subnet on the peer end must be added to the tunnel configuration on the Cloud VPN gateway.

- Dedicated Interconnect, used if you don't want to go over the Internet and you can meet Google in one of the Dedicated Interconnect points of presence. You would be using a Google Edge Location (you can connect to it directly, or via a Carrier), with a Google Peering Edge (PE) device to which your physical Router (CE) connects [you need to be in a supported location; Madrid is included]. This is not cheap: currently around $1,700 per month per 10 Gbps link, with a maximum of 80 Gbps (8 x 10 Gbps links)!

- Direct Peering/Carrier Peering, which Google does not charge for, but there is also no SLA. Peering is a direct connection into Google's network. It's available in more locations than Dedicated Interconnect, and it can be done either directly with Google (Direct Peering), if you can meet Google's direct peering requirements (you need a connection in a colocation facility, either directly or through a carrier-provided wave service), or via a Carrier (Carrier Peering).
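The throughput figures for the first two options translate into simple sizing arithmetic. The Python sketch below assumes the conservative end of the per-tunnel VPN range (1.5 Gbps), 10 Gbps Interconnect links with an 8-link (80 Gbps) maximum, and a monthly price of about $1,700 per link; real VPN throughput depends on packet size, latency and the peer device, and prices change, so treat the results as rough estimates only.

```python
import math

VPN_TUNNEL_GBPS = 1.5               # conservative end of the 1.5-3 Gbps range
INTERCONNECT_LINK_GBPS = 10
INTERCONNECT_MAX_LINKS = 8          # 8 x 10 Gbps = 80 Gbps
INTERCONNECT_LINK_USD_MONTH = 1700  # approximate; check current pricing


def vpn_tunnels_needed(target_gbps, per_tunnel_gbps=VPN_TUNNEL_GBPS):
    """Number of Cloud VPN tunnels to reach target_gbps (rounded up)."""
    if target_gbps <= 0:
        raise ValueError("target_gbps must be positive")
    return math.ceil(target_gbps / per_tunnel_gbps)


def interconnect_plan(target_gbps):
    """Return (links, approximate monthly cost in USD) for Dedicated Interconnect."""
    if target_gbps <= 0:
        raise ValueError("target_gbps must be positive")
    links = math.ceil(target_gbps / INTERCONNECT_LINK_GBPS)
    if links > INTERCONNECT_MAX_LINKS:
        raise ValueError(f"{target_gbps} Gbps exceeds the "
                         f"{INTERCONNECT_MAX_LINKS * INTERCONNECT_LINK_GBPS} Gbps maximum")
    return links, links * INTERCONNECT_LINK_USD_MONTH


print(vpn_tunnels_needed(5))   # 4
print(interconnect_plan(25))   # (3, 5100)
```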

And, as always, Google's documentation provides a Choice Chart if you're not sure which option is right for you.

How do I transfer my data from my Data Center to GCP?

When transferring your content into the cloud, you would use the "gsutil" command-line tool, and keep in mind:

- Parallel composite uploads (enabled through -o, e.g. -o GSUtil:parallel_composite_upload_threshold=150M) break larger files into pieces for faster uploads.

- Multi-threaded uploads (-m) are for large numbers of smaller files. If you have a bunch of small files, you should group them together and compress them.

- You can add multiple Cloud VPNs to reduce the transfer time.

- By default, gsutil will occupy the entire available bandwidth, so you may need an external tool to throttle it. When a transfer fails, gsutil retries by default.

- For ongoing automated transfers, use a cron job.
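The advice above can be turned into a small Python helper that bundles small files into one compressed archive and then builds a `gsutil cp` command line, adding `-m` when several files are copied and the `-o GSUtil:parallel_composite_upload_threshold=150M` option when a file is large. The size thresholds, bucket URL and file names are hypothetical, and the helper only builds the argument list; actually running it (for example with `subprocess.run`, or from the cron job mentioned above) is left to you.

```python
import os
import tarfile

# Illustrative thresholds (assumptions, not gsutil defaults).
SMALL_FILE_BYTES = 1 * 1024 * 1024      # below this, files get bundled
LARGE_FILE_BYTES = 150 * 1024 * 1024    # above this, use parallel composite upload


def bundle_small_files(paths, archive_path):
    """Group many small files into one .tar.gz archive."""
    with tarfile.open(archive_path, "w:gz") as tar:
        for path in paths:
            tar.add(path, arcname=os.path.basename(path))
    return archive_path


def gsutil_cp_command(paths, bucket_url):
    """Build (not run) a gsutil cp argument list for the given local files."""
    cmd = ["gsutil"]
    if len(paths) > 1:
        cmd.append("-m")  # copy several files in parallel
    if any(os.path.getsize(p) > LARGE_FILE_BYTES for p in paths):
        # Split large files into parts that are uploaded in parallel.
        cmd += ["-o", "GSUtil:parallel_composite_upload_threshold=150M"]
    return cmd + ["cp", *paths, bucket_url]


def plan_upload(paths, bucket_url, archive_path):
    """Bundle the small files (if more than one), then build the copy command."""
    small = [p for p in paths if os.path.getsize(p) < SMALL_FILE_BYTES]
    rest = [p for p in paths if os.path.getsize(p) >= SMALL_FILE_BYTES]
    if len(small) > 1:
        rest.append(bundle_small_files(small, archive_path))
    else:
        rest.extend(small)
    return gsutil_cp_command(rest, bucket_url)
```

For example, with two small local files `a.log` and `b.log`, `plan_upload(["a.log", "b.log"], "gs://example-bucket", "logs.tar.gz")` would create `logs.tar.gz` and return `["gsutil", "cp", "logs.tar.gz", "gs://example-bucket"]`.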

Google Transfer Appliance is a new thing (probably not in the exam): it allows you to copy all your data onto a physical appliance, ship it to Google, and they will load it into the Cloud for you.

Load Balancing in GCP

One of the most important parts of Google Cloud, because together with the Auto Scaling of Managed Instance Groups it enables the Elasticity much needed in the cloud.

Keep in mind that the Load Balancing services for GCE and GKE work in different ways, but basically they achieve the same thing: Auto Scaling. Here is how this works:

- In GCE there is a managed group of instances generated from the same template (Managed Instance Group). By enabling a Load Balancing service, you get a single global frontend (one IP address) for your Instance Group, plus a Health check service launched from the Balancer to the Instances, so that traffic only reaches healthy instances. The autoscaler then adds or removes instances based on signals such as CPU utilization or Load Balancing serving capacity.

- In GKE you'd have a Kubernetes Cluster, and the entire Elastic operation of your containers is one of the signature functionalities of the Kubernetes Cluster, so you don't have to worry about configuring any of this manually.
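The scaling decision itself can be illustrated with a simplified target-tracking calculation in Python: pick the group size that would bring average CPU utilization back to the target, clamped to the group's minimum and maximum. This has the same shape as the formula the Kubernetes Horizontal Pod Autoscaler documents, but it is an assumption-laden sketch, not the exact algorithm of the GCE autoscaler or of Kubernetes (both add stabilization windows and other signals). Utilization is given in whole percent to keep the arithmetic exact.

```python
def recommended_size(current_size, avg_cpu_pct, target_cpu_pct, min_size, max_size):
    """Size that would bring average CPU back to target_cpu_pct, within [min_size, max_size]."""
    if not 0 < target_cpu_pct <= 100:
        raise ValueError("target_cpu_pct must be between 1 and 100")
    # Integer ceiling of current_size * avg_cpu_pct / target_cpu_pct.
    wanted = (current_size * avg_cpu_pct + target_cpu_pct - 1) // target_cpu_pct
    return max(min_size, min(max_size, wanted))


print(recommended_size(4, 90, 60, min_size=2, max_size=10))  # 6 (scale out)
print(recommended_size(4, 15, 60, min_size=2, max_size=10))  # 2 (scale in, floor at min)
```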

Let's get deeper into the types of Load Balancing (LB) service in GCP. You should always keep the ISO-OSI model in mind: if you can provide the LB service at a higher layer, go for it! This means that if you can do HTTPS Balancing, go for that rather than SSL. If you can't go HTTPS, go for SSL. If your traffic is not encrypted, sure, go for TCP. Only if NONE of these works for you should you settle for the simple Network LB Service.
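The rule of thumb above (balance at the highest layer your traffic allows) fits in a small Python function. The protocol labels are hypothetical inputs, treating UDP as the typical "none of the proxies fit" case is an assumption, and the returned strings are simply the product names used in this post; real choices also depend on ports, backend types and whether you need global reach.

```python
def choose_external_lb(protocol):
    """Pick the highest-layer external LB for a protocol label.

    protocol: one of "https", "http", "ssl", "tcp", "udp" (hypothetical labels).
    """
    protocol = protocol.lower()
    if protocol in ("https", "http"):
        return "HTTP(S) Load Balancing (global)"
    if protocol == "ssl":
        return "SSL Proxy Load Balancing (global)"
    if protocol == "tcp":
        return "TCP Proxy Load Balancing (global)"
    if protocol == "udp":
        # None of the proxy-based balancers fit, so fall back to the Network LB.
        return "Network Load Balancing (regional)"
    raise ValueError(f"unsupported protocol: {protocol!r}")


print(choose_external_lb("HTTPS"))  # HTTP(S) Load Balancing (global)
print(choose_external_lb("udp"))    # Network Load Balancing (regional)
```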

IMPORTANT: Whenever you are using one of the encrypted LB Services (HTTPS, SSL/TLS), the encryption terminates on the Load Balancer, and the Load Balancer then establishes a separate connection to each of the Active Instances, which is encrypted again if you configure the backends to use HTTPS or SSL.

There are 2 types of Load Balancing on GCP:

- EXTERNAL Load Balancing, for access from the OUTSIDE (Internet):
  - GLOBAL Load Balancing:
    - HTTP/HTTPS Load Balancing
    - SSL Proxy Load Balancing
    - TCP Proxy Load Balancing
  - REGIONAL Load Balancing:
    - Network Load Balancer (notice that the Network Load Balancer is NOT Global; it is only available in a single region)

- INTERNAL Load Balancing, for inter-tier access (for example, web servers accessing Databases)
